Quantir Whitepaper


1. Executive Summary

Quantir is a DeFi risk-intelligence platform built for users whose cost of delayed reaction is measurable. Its primary high-value users are liquidity providers, hedged participants, and desks or operators who need short-horizon protocol-deterioration signals before those conditions fully express themselves in price, funding drag, withdrawal congestion, or reduced hedge quality. Rather than treating DeFi monitoring as a stream of disconnected charts and alerts, Quantir turns fragmented protocol evidence into a decision-support workflow.

At the MVP stage, Quantir supports an initial set of 11 DeFi protocols through a watchlist-to-dashboard workflow that helps users prioritize monitored protocols, inspect risk movement, review suspicious transactions, examine contract-aware intelligence, and incorporate external context. This initial scope is a focused operating universe rather than a fixed ceiling on product coverage.

Architecturally, Quantir is designed as a layered pipeline spanning data collection and normalization, transaction intelligence, hidden private-flow coverage, smart-contract intelligence, risk scoring, and a later hypothesis-inference layer based on an adversarial agent framework. The mathematical risk core produces the protocol risk score first; the AI and agent layer interprets that score through competing hypotheses, explanation, and analyst-facing context rather than generating the core score itself.

Economically, Quantir's value proposition is lead-time. Earlier risk escalation, especially when supported by transaction evidence, contract-aware runtime signals, and forecast context, can preserve the ability to reduce exposure, reconfigure a hedge, or avoid remaining positioned against a deteriorating protocol for too long.

2. Problem and Risk Landscape in DeFi

DeFi protocols operate in an environment where risk can emerge simultaneously from code, liquidity structure, governance actions, hidden capital movement, oracle dependencies, and shifting market conditions. Unlike traditional financial systems, many of these signals are public in principle but fragmented in practice. Analysts often have to combine blockchain activity, protocol metrics, external announcements, and contract behavior manually, under time pressure and without a unified evidence surface.

This creates three practical problems. First, relevant signals are distributed across different technical and informational layers, making it difficult to detect meaningful protocol stress early. Second, not all meaningful activity is visible through the public mempool alone; private orderflow and bundled execution can reduce visibility into critical movements before they affect protocol state. Third, even when raw data is available, translating it into actionable risk interpretation remains difficult without systematized evidence, scoring, and analytical context.

In this context, serious protocol monitoring requires more than token-price tracking or generic anomaly alerts. It requires a system capable of integrating transaction behavior, liquidity changes, governance activity, contract-level intelligence, and external information into a coherent analytical workflow.

3. Why Existing Approaches Are Insufficient

Many existing approaches to DeFi monitoring focus on only one slice of the risk surface. Market dashboards emphasize price and liquidity metrics but often underweight contract behavior and protocol-specific event interpretation. Security reviews may focus on code quality but do not necessarily capture runtime behavior or ongoing operational drift. Alerting tools can detect visible abnormal events, yet still struggle with hidden private-flow execution or with the broader interpretation of what a cluster of events means at the protocol level.

Public mempool monitoring also has clear limits. A protocol-impacting liquidity exit or large coordinated move can be executed through private orderflow or builder-bundle pathways, reducing pre-trade visibility. At the same time, raw anomaly detection without context often leads either to missed material signals or to an alert stream that is too noisy to support operational decision-making.

Quantir's design addresses these limits by combining risk scoring, transaction intelligence, hidden-flow coverage, smart-contract intelligence, forecasting, and AI-assisted hypothesis discussion into one evidence-driven workflow.

This framing also distinguishes Quantir from four baseline categories of existing tools: market and liquidity dashboards, point-in-time contract-review or audit surfaces, generic alerting and anomaly-detection systems, and transaction or mempool-monitoring tools that do not extend into broader protocol interpretation. Quantir's position is that serious DeFi protocol monitoring requires these evidence layers to be connected rather than consumed in isolation.

4. Quantir Solution Overview

4.1 Product Workflow Overview

Quantir is designed around a workflow that moves from portfolio-level prioritization to protocol-level investigation. Rather than forcing analysts to begin from a dense protocol dashboard or a generic market terminal, the platform starts with a watchlist where monitoring scope is defined and then moves into a protocol-specific dashboard where the system's conclusions can be inspected in detail. This progression is central to the product story because it explains how Quantir supports both monitoring breadth and investigative depth.

Detailed UI walkthroughs, metric-by-metric panel explanations, and deeper technical documentation are better maintained as companion pages rather than copied inline into the main narrative. The companion references for this public package are Quantir UI Walkthrough, Use Cases, and DeFi Risk Engine.

Primary High-Value Users

- LPs and hedged participants with live protocol exposure

- users exposed to funding drag, carry compression, or delayed exit cost

- desks or operators who need short-horizon protocol-deterioration signals before market repricing fully occurs

Why They Pay

- because the price of delay is often measurable in funding drag, slippage, reduced hedge quality, or missed withdrawal windows

- because earlier protocol deterioration signals can preserve action choice before the market fully reprices the risk

- because protocol-level risk, TVL context, and corroborating evidence are valuable only when they support a concrete decision rather than remaining passive dashboard data

Example Decision Scenario

- Quantir detects a sharp increase in protocol risk.

- The forecasting layer signals possible near-horizon deterioration in TVL or related protocol indicators.

- Suspicious transactions, contract-aware runtime signals, and hidden-flow evidence confirm that the state change is abnormal rather than purely cosmetic.

- The user reduces exposure, reconfigures a hedge, rotates liquidity, or avoids remaining positioned against a deteriorating protocol for too long.

4.2 Watchlist

The watchlist is the operational front door of Quantir. It is the first workspace a user encounters after entering the application and the place where monitoring scope is defined. Instead of presenting an undifferentiated list of market assets, Quantir begins with a protocol selection surface where users add the DeFi protocols that matter to them and turn the platform into a focused monitoring environment.

This workflow is directly tied to subscription scope. In the current product packaging, protocol capacity is determined by tier, with Free supporting one active protocol, Base supporting three active protocols, and Pro supporting unlimited monitored protocols. Within this framework, the Not Added view exposes the currently available protocol catalog, while the Added view represents the active monitoring set already selected by the user.

Each watchlist row is designed as a compact protocol monitoring card. Its purpose is to help a user compare multiple protocols quickly without opening each dashboard individually. For every tracked protocol, the watchlist surfaces recent and total alert activity, a short summary of the latest event, the current modeled risk trend, token price trend, TVL context, confidence level, and the contribution of governance- and whale-related factors to the current interpretation of risk. Importantly, the watchlist risk line reflects the protocol's own modeled risk movement rather than simple token-market volatility.

This design solves a practical problem for users who monitor many protocols at once. Instead of manually inspecting each protocol in sequence, the user can scan the watchlist, identify which monitored system shows the strongest alert pressure or the most abnormal change in risk conditions, and then open that protocol's dashboard for deeper investigation. In that sense, the watchlist functions as Quantir's triage layer, while the dashboard functions as the protocol-level investigation layer.

For Quantir's primary high-value users, this triage layer also has direct economic meaning. Earlier prioritization preserves the user's set of available actions while exit conditions, hedge quality, and liquidity are still relatively favorable. In practice, that can mean reducing exposure before deterioration becomes obvious in price alone, resizing a hedge before funding drag compounds, or avoiding unnecessary time spent positioned against a worsening protocol state.

At the MVP stage, Quantir supports an initial set of 11 DeFi protocols. This coverage is a focused starting universe rather than a hard product limit. The longer-term design goal is broader: Quantir is intended to expand protocol coverage through automated onboarding so that newly emerging DeFi protocols can enter the monitoring pipeline and begin generating baseline metrics with minimal manual setup. For larger monitoring operations, this coverage layer is also expected to extend beyond the user interface through API-connected workers or agents.

4.3 Dashboard

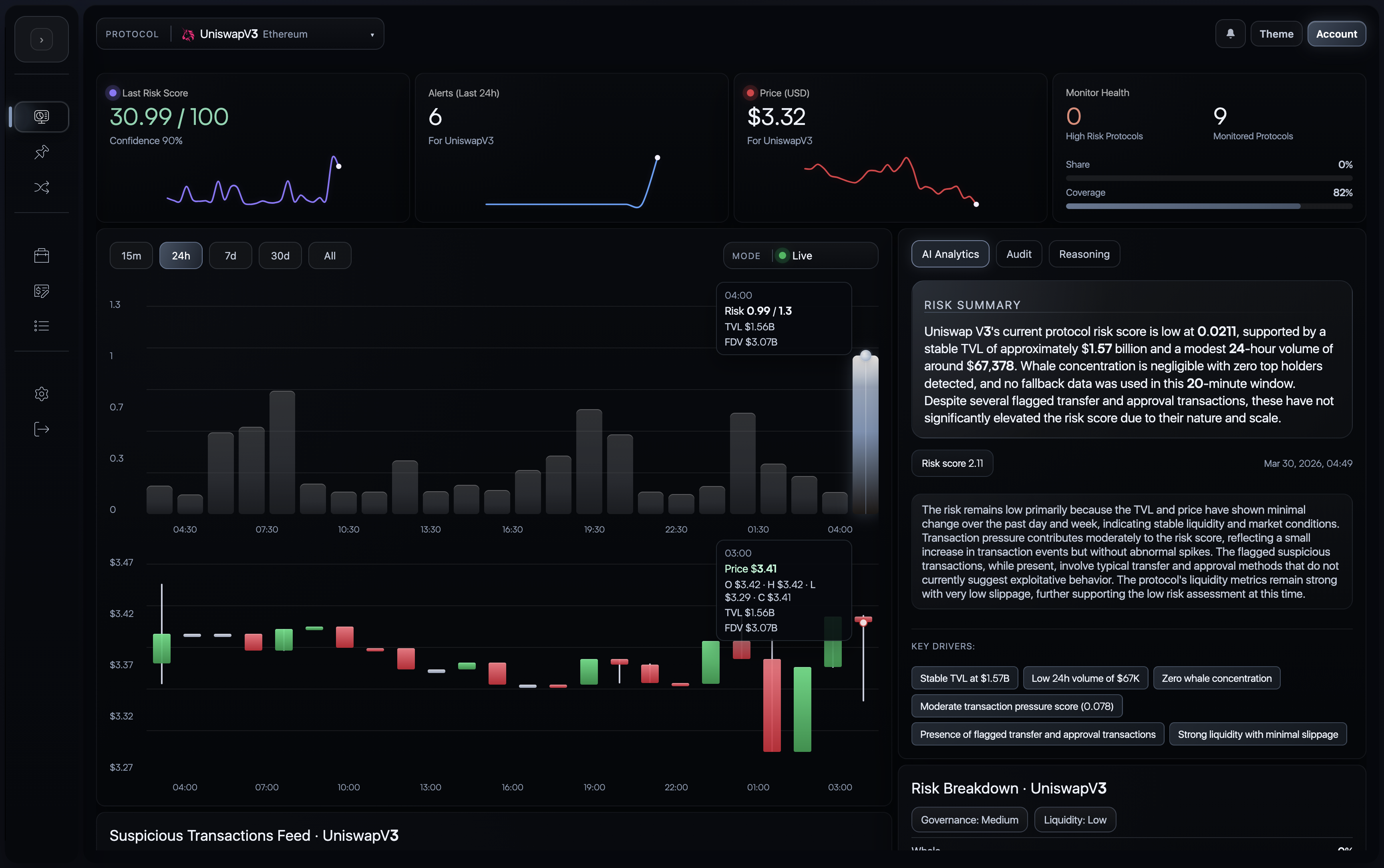

After a protocol has been identified in the watchlist as requiring deeper attention, the user moves into the Quantir dashboard. The dashboard is the platform's primary protocol investigation workspace. Its role is not merely to display a risk score, but to connect that score with the underlying evidence, context, and event flow that explain why the protocol's current state deserves attention.

The first analytical layer of the dashboard is the top summary strip. This section provides immediate orientation by combining the current modeled protocol risk score, a short-term chart of risk movement, alert activity over time, token price behavior, and compact contextual metrics such as confidence, update timing, and monitoring state. The alert view is particularly important because spikes in alert activity signal an abnormal concentration of risk-relevant events, even when those events do not yet amount to a confirmed exploit or failure.

The central dashboard view is an analytical comparison surface composed of two linked visual layers: a risk-history chart across selectable time windows and a candlestick price chart beneath it. This pairing allows the user to examine how modeled protocol risk evolves alongside market price behavior. It is best understood as an evidence-based analytical surface rather than a deterministic claim of causality. Its value lies in allowing analysts to observe where risk escalation and market reaction align, where they diverge, and how protocol stress may propagate into visible market behavior over time.

Below the main chart area, the suspicious transactions feed translates macro-level model movement into event-level evidence. This feed surfaces recent flagged transactions together with source and destination wallets, transaction hash, transferred value, detected method, timestamp, and estimated impact on risk. In practice, this allows the user to move from an abstract protocol-level score to the concrete on-chain events that contributed to that score's movement.

The dashboard also contains an AI Risk Intelligence panel that provides the platform's concise analytical interpretation of the protocol's current state. This is not a separate scoring engine, but a compact explanation layer built on top of the broader evidence surface. Alongside it, the Audit tab provides an automated contract-capability review used for protocol onboarding and runtime enrichment. It highlights important methods, privileged or upgrade-sensitive functions, likely owner and protocol-controlled addresses, and surface-level findings that can materially affect risk interpretation. The module also feeds discovered audit intelligence, including flagged methods, administrative methods, ownership candidates, contract lists, and ABI fragments, back into the monitoring stack. This layer should not be described as a replacement for a full third-party security audit, but it is intended to mature into a highly reliable automated contract-assessment surface over time.

A separate Risk Breakdown panel exposes the colder numerical side of the system's reasoning. Here, the user can inspect factor contributions and protocol-state indicators such as whale influence, governance influence, liquidity influence, confidence, TVL, TVL delta, FDV, token price, market capitalization, and model update timing. These factors all contribute to protocol interpretation, although their relative importance can differ across protocol types and states. This panel is important to the whitepaper because it shows that Quantir does not rely on narrative output alone; its conclusions remain anchored in observable data.

The final contextual layer is the risk-impacting news feed. This block brings in recent governance posts, public announcements, articles, and ecosystem updates associated with the monitored protocol and allows the user to inspect source materials directly. Its value is not simply that it aggregates links, but that it places external context alongside on-chain evidence, contract intelligence, structured metrics, and model interpretation in a single workflow. This reflects a broader design principle behind Quantir: protocol risk is not purely an on-chain phenomenon. It is often shaped simultaneously by governance communication, operational announcements, ecosystem developments, and public narrative.

Taken together, the dashboard is a unified evidence surface for analyst decision support. It combines continuous model output, exact on-chain data, suspicious transaction evidence, contract-aware intelligence, external context, and machine-generated interpretation into one investigation environment. The core promise of this page is not merely monitoring, but detailed, evidence-based protocol analytics grounded in precise observable signals and interpreted through a trained inference model.

In the main whitepaper, one general dashboard screenshot is sufficient. It should communicate the approximate appearance of the application and the fact that Quantir brings score context, charts, evidence, and explanation into one surface. Detailed panel-by-panel UI explanation should live on the companion page instead of being duplicated inline.

For a detailed walkthrough of the application screens, modules, metrics, and panel logic, see Quantir UI Walkthrough.

5. System Architecture and Data Pipeline

5.1 Architecture Overview

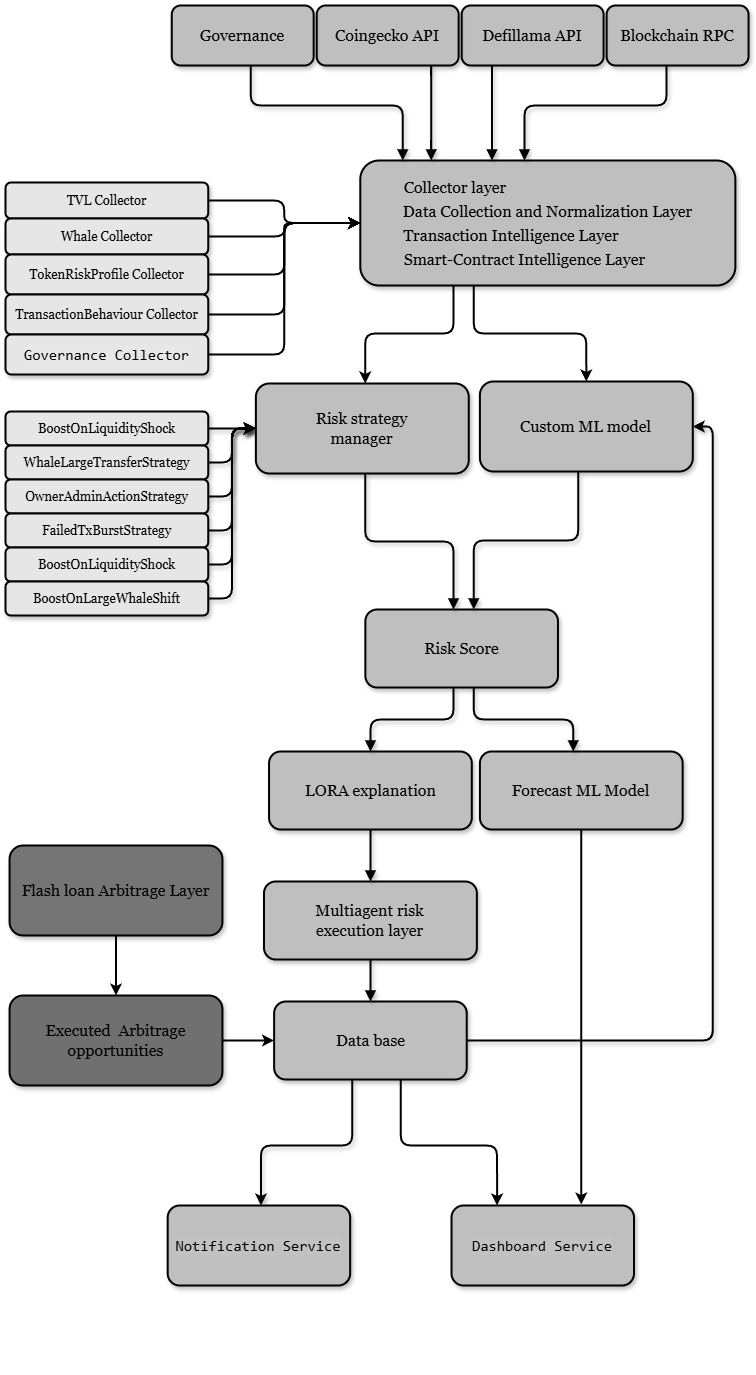

Quantir's system architecture is designed as a layered pipeline that moves from raw collection to structured evidence, then to quantitative risk measurement, and finally to analyst-facing interpretation and delivery. This architecture is best understood as a sequence of interacting layers rather than as a flat set of services.

Detailed implementation documents, extended diagrams, and service-level notes are maintained in companion technical pages rather than copied inline into the main narrative. The technical companion page is DeFi Risk Engine, while applied evidence examples are maintained in Use Cases.

The main architectural order is:

- Data collection and normalization layer

- Transaction intelligence layer

- Hidden private flow detection

- Smart-contract intelligence layer

- Risk scoring engine

- Hypothesis inference layer

- Forecasting layer

- Explanation layer

- Data and evidence store

- Client and alert delivery layer

5.2 Data Collection and Normalization Layer

Quantir's architecture begins with a collection and normalization layer that ingests protocol-relevant data from blockchain infrastructure, protocol data sources, market-data endpoints, governance channels, and external information feeds. The purpose of this layer is not only to fetch data, but to standardize timing, normalize source formats, and prepare the downstream evidence pipeline for consistent scoring and interpretation.

High-level inputs currently relevant to the architecture include:

- on-chain metrics

- analytics of invoked smart-contract functions

- transfer analytics, including hidden-flow or private-mempool analysis

- protocol risk score and predictive / forecasting analytics

- news analysis

At the architecture level, the main source families include blockchain RPC access, protocol-metric collectors, transaction and transfer analytics, ABI and verified-source metadata, governance and news inputs, and monitoring artifacts generated by the audit, scoring, and inference pipeline. Exact refresh cadence and source-by-source timing policy are better maintained in technical documentation rather than overloaded into the architecture narrative.

5.3 Transaction Intelligence Layer

Quantir's architecture includes a transaction-intelligence layer that monitors protocol-relevant activity across public transaction streams, protocol interactions, and post-execution state changes. Rather than treating all on-chain activity as equally informative, this layer prioritizes transactions that materially affect liquidity, governance posture, contract interaction patterns, or protocol state. The output of this layer is structured transaction evidence that can be used by the scoring system, the later hypothesis-inference layer, and analyst-facing workflows.

Examples of architecture-level evidence events include:

- suspicious transfer

- suspicious smart-contract function invocation

- off-chain news event

- risk increase event

5.4 Hidden Private Flow Detection

In addition to public transaction and mempool monitoring, Quantir is designed to reduce blind spots associated with private orderflow and builder-bundle execution. The hidden-flow layer focuses on three primary evidence classes: sudden TVL dislocations without comparable visible pending activity, abnormal slippage or price impact without corresponding public orderflow, and block-level execution patterns consistent with bundled private activity. Together, these signals improve coverage of protocol-impacting activity that may bypass standard public pre-trade observation.
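The three evidence classes above can be sketched as simple window-level checks. This is a minimal illustration, not Quantir's implementation: the `BlockWindow` fields, the function name, and every threshold are hypothetical and chosen only to make the logic concrete.

```python
from dataclasses import dataclass


@dataclass
class BlockWindow:
    """Observations for one monitoring window (all fields are illustrative)."""
    tvl_before: float                # protocol TVL at window start, in USD
    tvl_after: float                 # protocol TVL at window end, in USD
    visible_pending_usd: float       # value seen in the public mempool for this protocol
    observed_price_impact: float     # realized price move as a fraction, e.g. 0.03
    public_flow_price_impact: float  # impact explainable by public orderflow
    bundle_like_blocks: int          # blocks whose ordering resembles bundled execution


def hidden_flow_signals(w, tvl_drop_min=0.05, coverage_min=0.5,
                        impact_gap_min=0.01, bundle_min=1):
    """Return the hidden-flow evidence classes that fire for this window.

    All thresholds are hypothetical defaults, not documented Quantir values.
    """
    signals = []

    # 1. Sudden TVL dislocation without comparable visible pending activity.
    tvl_drop = max(0.0, w.tvl_before - w.tvl_after)
    if w.tvl_before > 0 and tvl_drop / w.tvl_before >= tvl_drop_min:
        if w.visible_pending_usd < coverage_min * tvl_drop:
            signals.append("tvl_dislocation")

    # 2. Price impact that public orderflow does not explain.
    if w.observed_price_impact - w.public_flow_price_impact >= impact_gap_min:
        signals.append("unexplained_price_impact")

    # 3. Block-level patterns consistent with bundled private activity.
    if w.bundle_like_blocks >= bundle_min:
        signals.append("bundle_pattern")

    return signals
```

Returning the list of fired classes, rather than a single boolean, keeps each signal available as separate evidence for downstream scoring.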

Additional hidden-flow signals, including missing expected MEV behavior, richer bundle attribution, and stronger multi-signal hidden-flow scoring, remain future extensions rather than established core components.

5.5 Smart-Contract Intelligence Layer

Quantir's architecture includes a smart-contract intelligence layer that begins with a shallow contract-capability audit during protocol onboarding and later supports runtime enrichment. The module combines ABI retrieval, verified-source extraction, ownership inference, heuristic method classification, and compact audit summarization to produce a stored audit artifact for each monitored protocol surface.

The resulting audit artifact is not intended to remain a passive dashboard note. It is persisted into runtime protocol configuration, where discovered method classes, ownership candidates, protocol-controlled contract lists, and ABI fragments are rehydrated into live monitoring logic. Runtime monitoring then evaluates not only whether a function was invoked, but what category of method it was, how much value or affected volume was involved, what flagged status it carried, and how strongly that invocation should contribute to broader protocol-risk logic. When ownership or caller context is available, that context can further sharpen the interpretation of sensitive calls. In this way, contract-aware intelligence moves from onboarding audit to runtime configuration to weighted risk contribution, while still being surfaced in the dashboard's Audit view.

Together, these mechanisms provide contract-aware context to the broader risk-intelligence pipeline without claiming to replace a full formal security audit. The correct framing is runtime risk intelligence over contract surfaces, not formal exploit certification.

In public terms, the stable runtime evidence families are invocation frequency, value-sensitive activity or transferred volume, and flagged-method activity, while exact per-contract method sets remain protocol-specific and are documented more deeply in the technical companion.

5.6 Risk Scoring Engine

Quantir's risk-scoring engine combines normalized evidence from transaction intelligence, market and liquidity signals, governance-related indicators, smart-contract intelligence, and other protocol-state inputs into a unified protocol risk score. This score is intended to serve as the primary quantitative expression of current protocol risk and is computed before downstream hypothesis interpretation. In the broader architecture, the risk score acts as an input to later analytical layers rather than as an output of the agent-based hypothesis system.

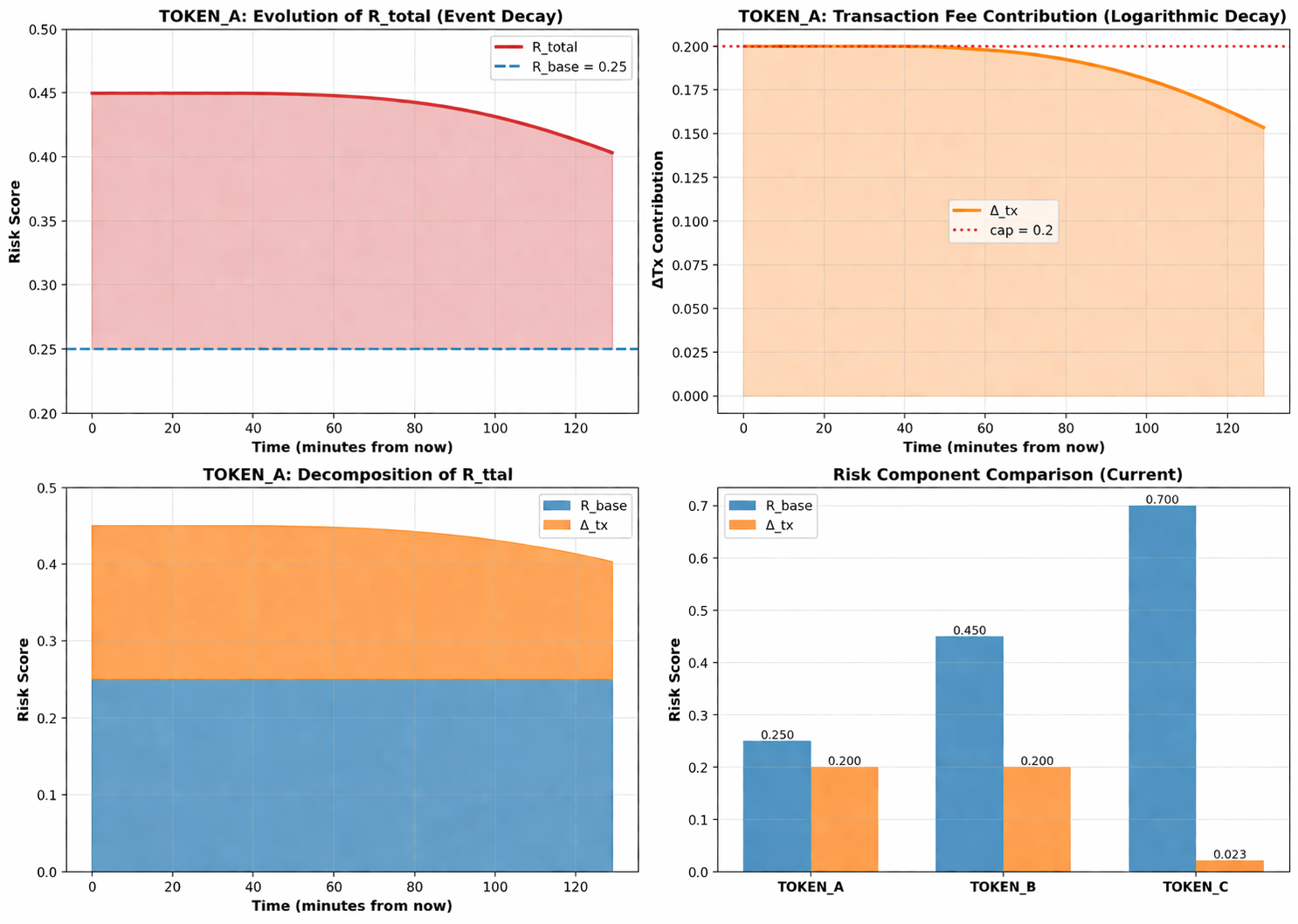

One representative scoring pattern that is already safe to mention is strategy-adjusted transaction pressure. In that formulation, the trained model output remains the base score, while recent strategy-matched transaction events contribute a separate bounded delta rather than replacing the model core. At a high level, the formulation is R_total = clamp01(R_base + Delta_tx), where Delta_tx is normalized relative to protocol size, decays over time, and is capped before being added to the base score. This is important because it shows how Quantir can remain model-driven while still reacting to meaningful transaction pressure.
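A minimal sketch of this formulation follows, assuming exponential decay and a linear size normalization. The parameter names and values (`half_life_s`, `sensitivity`, `cap`) are hypothetical; the whitepaper fixes only the overall shape R_total = clamp01(R_base + Delta_tx), with a normalized, decaying, capped delta.

```python
def clamp01(x):
    """Clamp a value into the closed interval [0, 1]."""
    return max(0.0, min(1.0, x))


def delta_tx(events, protocol_size_usd, now, half_life_s=3600.0,
             sensitivity=10.0, cap=0.25):
    """Bounded, decaying transaction-pressure term.

    events: iterable of (timestamp_s, value_usd) for strategy-matched transactions.
    protocol_size_usd: reference size (for example FDV) used for normalization.
    half_life_s, sensitivity, cap: hypothetical tuning parameters.
    """
    if protocol_size_usd <= 0:
        return 0.0
    pressure = 0.0
    for ts, value_usd in events:
        age = max(0.0, now - ts)
        decay = 0.5 ** (age / half_life_s)                   # halves every half_life_s
        pressure += (value_usd / protocol_size_usd) * decay  # normalized to protocol size
    return min(cap, sensitivity * pressure)                  # capped before being added


def total_risk(r_base, events, protocol_size_usd, now):
    """R_total = clamp01(R_base + Delta_tx); r_base is the trained model output."""
    return clamp01(r_base + delta_tx(events, protocol_size_usd, now))
```

Because the delta is capped and the sum is clamped, a burst of matched transactions can raise the score but cannot replace the model output or push the total outside [0, 1].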

5.7 Hypothesis Inference Layer

After the protocol risk score has been computed, Quantir applies a hypothesis-inference layer based on an adversarial agent collegium. In the MVP architecture, each candidate hypothesis is represented by two debating agents, one arguing in favor of the hypothesis and one arguing against it, while a global judge evaluates the strength of both sides. This layer consumes the already computed risk score together with broader evidence from transaction intelligence, smart-contract analysis, on-chain metrics, predictive analytics, and external context, and produces hypothesis-level scores, confidence values, and analyst-facing conclusions. Importantly, this layer interprets risk rather than generating the core protocol risk score itself.

Current MVP hypotheses discussed by the agent system:

- Liquidity Drain

- Exploit Preparation

- Liquidation Cascade

- Market Manipulation

- Liquidity Migration

5.8 Forecasting Layer

Quantir is designed to incorporate a forecasting layer that extends the platform from current-state interpretation into forward-looking protocol monitoring. This layer is best positioned as an emerging but already meaningful component of the evidence stack: forecast-aware signals are part of the broader product narrative, while the fuller Chronos-based formulation belongs in the dedicated forecasting section. Architecturally, this layer supplies short-horizon predictive context that complements current-state risk scoring rather than replacing it.

5.9 Explanation Layer

The explanation layer converts raw scoring and inference outputs into analyst-facing interpretation. It is intended to summarize why the current protocol state appears risky or stable, which signals matter most, and how competing hypotheses are ranked.

5.10 Data and Evidence Store

The architecture includes a persistence layer, a reusable evidence store, for protocol-state history, evidence events, audit outputs, model outputs, and alert-relevant events. It supports dashboard retrieval, historical comparison, alert delivery, and later evaluation workflows.

This section remains technology-agnostic in the public whitepaper.

5.11 Client and Alert Delivery Layer

At the final serving stage, Quantir exposes results through the dashboard interface, direct alert channels such as email and Telegram, and later automation or API-connected operational workflows for larger monitoring setups. This layer is where protocol monitoring becomes usable for human analysts and operational teams.

6. Risk Model Methodology

Quantir's methodology transforms heterogeneous protocol signals into a unified protocol risk score through a staged evidence pipeline. The pipeline can be described in four steps. First, the system groups inputs into evidence families, including market and liquidity state, transaction behavior, hidden-flow signals, smart-contract intelligence, governance and news context, and forecast-aware features. Second, these evidence families are normalized into comparable analytical inputs rather than treated as isolated alerts. Third, a trained inference model evaluates the combined evidence against historical protocol trajectories, with particular emphasis on failed, exploited, or structurally degraded projects, in order to produce a unified protocol risk score and associated confidence signals. Fourth, the resulting risk score becomes one of the inputs to later hypothesis interpretation rather than an output of the agent layer.

Within this methodology, smart-contract intelligence is meant to function as weighted runtime evidence rather than as static audit commentary. Persisted audit artifacts allow method classes, value-sensitive activity, flagged-method usage, and related ownership context to enter the scoring model as live evidence features. This matters because it ties contract-awareness directly to risk contribution instead of relegating it to a passive explanatory layer.

The same logic applies to transaction pressure. Recent transaction events do not overwrite the base model score directly. Instead, they contribute an additive, bounded, and decaying Delta_tx term whose event severity is normalized against protocol size using FDV or fallback reference metrics. This provides a clear explanation for why Quantir's score can respond to meaningful transaction behavior without becoming an unstable event counter or a purely heuristic alert meter.

Methodologically, the whitepaper should also remain explicit about two boundaries. The first is that privileged activity such as governance actions, upgrades, or administrative operations remains risk-relevant by default even when such activity may later prove legitimate. The second is that contextual cooperation from protocols improves interpretation quality but does not suppress alerts or grant immunity from scrutiny. In other words, Quantir aims to reduce false positives through contextual conditioning rather than by ignoring sensitive behavior.

The methodology also includes signal-family normalization, interaction between static and dynamic evidence, confidence estimation, and the use of caps, decay, and saturation logic in the broader scoring framework. Exact weights, thresholds, and final implementation formulas remain implementation-dependent and are better handled in DeFi Risk Engine.

7. Forecasting Layer (Detailed)

Quantir's forecasting layer is intended to provide forward-looking protocol context rather than only current-state monitoring. It is described here as a Chronos-oriented predictive component that consumes protocol-relevant time series and produces short-horizon contextual signals for risk interpretation. The key boundary is that forecast outputs are probabilistic rather than deterministic: they enrich current-state analysis rather than claim guaranteed prediction of failure or exploit events.

Forecast-aware features may include projected movement in protocol-relevant indicators such as TVL, FDV, token price direction, or broader trend state when those projections are useful for near-term risk interpretation. Exact model configuration, horizon design, and performance claims remain deferred until the Chronos-based implementation path and evaluation methodology are frozen.

8. Agent Collegium and Hypothesis Inference

The hypothesis-inference system in Quantir is based on an adversarial multi-agent framework. Each candidate hypothesis is represented by a pair of debating agents: one arguing that the hypothesis explains the current protocol state and one arguing that it does not. Both sides operate on a shared evidence base that includes the current protocol risk score, on-chain metrics, transaction analysis, smart-contract intelligence, predictive analytics, and external information feeds. A single global judge agent evaluates the strength of both sides, assigns structured scores, and produces normalized hypothesis scores and confidence values for dashboard presentation and analyst-facing interpretation.

This distinction should remain explicit throughout the whitepaper:

- Hypotheses are debated by the AI system.

- Strategies are the detection algorithms or heuristic pathways used to identify risk-relevant patterns.

- Events are observed evidence signals entering the pipeline.

Current MVP hypotheses:

- Liquidity Drain

- Exploit Preparation

- Liquidation Cascade

- Market Manipulation

- Liquidity Migration

For each hypothesis, the judge produces:

- hypothesis_score

- confidence

- short conclusion

- pro-side highlights

- contra-side highlights

- final judge summary
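The per-hypothesis judge output listed above could be carried in a record like the one below. This is a sketch under stated assumptions: only `hypothesis_score` and `confidence` are names taken from the text, the other field names are hypothetical, and the sum-to-one normalization is one possible reading of "normalized hypothesis scores", not a documented method.

```python
from dataclasses import dataclass, field


@dataclass
class HypothesisVerdict:
    """One judge result per hypothesis (field names beyond the first two are assumed)."""
    hypothesis: str
    hypothesis_score: float
    confidence: float
    conclusion: str = ""
    pro_highlights: list = field(default_factory=list)
    contra_highlights: list = field(default_factory=list)
    judge_summary: str = ""


def normalize_scores(raw_scores):
    """Scale non-negative raw judge scores so they sum to 1 (assumed scheme)."""
    total = sum(raw_scores.values())
    if total <= 0:
        return {name: 0.0 for name in raw_scores}
    return {name: score / total for name, score in raw_scores.items()}


def rank_verdicts(verdicts):
    """Order verdicts from the strongest to the weakest hypothesis score."""
    return sorted(verdicts, key=lambda v: v.hypothesis_score, reverse=True)
```

For example, raw judge scores of 3.0 for Liquidity Drain and 1.0 for Liquidity Migration would normalize to 0.75 and 0.25, and the ranked list would put Liquidity Drain first.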

9. Smart-Contract Intelligence (Detailed)

This section expands the architecture-level smart-contract block into a dedicated technical explanation. In practice, Quantir's contract intelligence is operational rather than formal: its purpose is to turn newly onboarded or partially known protocol contracts into immediately usable monitoring artifacts.

At the public-documentation level, the module performs a shallow contract-capability audit for protocol onboarding and runtime enrichment. It is designed to discover privileged and high-risk callable methods from ABI and verified source code, resolve likely owner and protocol-controlled addresses, generate a concise contract-surface summary and findings, persist the result, and push the discovered intelligence back into runtime protocol configuration.

Public-facing capabilities include:

- discovery of privileged and high-risk methods

- heuristic classification into adminMethods and flaggedMethods

- likely owner and protocol-controlled address resolution

- compact audit artifact with summary, findings, and confidence

- persistence and reuse of completed audit records

- runtime rehydration of audit intelligence into method-aware monitoring configuration

- method-aware contribution of value-sensitive or flagged invocations to broader protocol-risk interpretation

The module is not intended to provide:

- formal security review

- vulnerability proof or exploit validation

- bytecode-level auditing

- guaranteed upgradeability classification across all proxy patterns

Operationally, the flow is: select root-contract candidates, fetch ABI and verified-source metadata, infer ownership and protocol-controlled contracts, classify methods heuristically, generate a shallow summary through a model path or deterministic fallback path, persist the result, and rehydrate runtime entries with discovered method and ownership intelligence. That rehydrated configuration is then used to interpret later function calls by method class, value sensitivity, flagged status, and ownership context when available. Baseline function categories that can be named safely include upgrade, admin, pause, mint/burn, and token-flow functions. These runtime signals are best understood as dynamic evidence for elevated scrutiny rather than as fixed vulnerability proofs, and exact formulas or thresholds remain out of scope in the public whitepaper.
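The heuristic classification step can be illustrated with a keyword pass over a standard Solidity ABI JSON array. The keyword table, the first-match-wins ordering, and the split of categories between adminMethods and flaggedMethods are all assumptions made for this sketch; the whitepaper names the categories but not the rules.

```python
# Hypothetical keyword heuristics; order matters because the first match wins.
CATEGORY_KEYWORDS = {
    "upgrade": ("upgrade", "setimplementation"),
    "admin": ("owner", "admin", "role"),
    "pause": ("pause",),
    "mint_burn": ("mint", "burn"),
    "token_flow": ("transfer", "withdraw", "deposit", "sweep"),
}

# Assumed split: governance-style categories count as admin, the rest as flagged.
ADMIN_CATEGORIES = {"upgrade", "admin", "pause"}


def classify_abi(abi):
    """Classify state-changing ABI functions by name into baseline categories."""
    categories = {}
    for entry in abi:
        if entry.get("type") != "function":
            continue  # skip events, errors, constructors
        if entry.get("stateMutability") in ("view", "pure"):
            continue  # read-only calls cannot change protocol state
        name = entry.get("name", "")
        lowered = name.lower()
        for category, keywords in CATEGORY_KEYWORDS.items():
            if any(keyword in lowered for keyword in keywords):
                categories[name] = category
                break
    return {
        "adminMethods": [n for n, c in categories.items() if c in ADMIN_CATEGORIES],
        "flaggedMethods": [n for n, c in categories.items() if c not in ADMIN_CATEGORIES],
        "categories": categories,
    }
```

Name matching of this kind is deliberately shallow: it keys on function names only, so overloaded functions collapse into one entry and unusual naming is missed, which is consistent with treating the output as evidence for elevated scrutiny rather than as a vulnerability proof.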

10. Explainability and Analyst Workflow

Quantir is intended to provide not only scores and alerts, but also a coherent analyst workflow. This workflow begins with watchlist-based prioritization, moves into dashboard-level protocol investigation, and then supports interpretation through risk summaries, suspicious transaction evidence, smart-contract context, structured metrics, and external news. The explanation layer is valuable because it reduces the burden of manually connecting all of these signals under time pressure.

An important boundary should remain explicit here as well: the mathematical risk core produces the protocol risk score and associated confidence signals first, while the AI and agent layer translates those outputs into ranked hypotheses, summaries, and evidence-oriented explanation. Quantir is therefore not intended to behave like an intuition-only LLM wrapper. It is intended to expose a model-driven risk core through an interpretable analyst surface.

An illustrative alert artifact can already be shown safely, even before the final output schema is frozen:

Alert Example (Illustrative)

Protocol: Example Protocol

Current risk change: 18.4 -> 31.7 / 100

Confidence: 0.84

Forecast context: near-horizon TVL deterioration risk elevated

Dominant hypothesis: Liquidity Drain

Top evidence:

- alert pressure spike across recent monitoring window

- suspicious value-moving transfer affecting monitored contracts

- abnormal flagged-method activity in contract-aware monitoring

- hidden-flow mismatch between visible orderflow and protocol-state change

Interpretation boundary:

- elevated scrutiny and action review warranted

- not a formal exploit confirmation

- user should review exposure, hedge assumptions, and exit/liquidity conditions

The whitepaper describes explainability at the workflow level and includes one illustrative artifact. The exact production schema for analyst-facing explanations remains implementation-dependent until the product output stabilizes.
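As a rough sketch only, the illustrative alert could be carried as a structured record like the one below. The `AlertArtifact` class and all of its field names are hypothetical and simply mirror the example; they do not represent the production schema, which is not yet frozen.

```python
from dataclasses import dataclass, field, asdict
import json


@dataclass
class AlertArtifact:
    """Hypothetical container mirroring the illustrative alert; not a frozen schema."""
    protocol: str
    risk_before: float          # score on a 0-100 scale
    risk_after: float           # score on a 0-100 scale
    confidence: float           # 0.0 to 1.0
    forecast_context: str
    dominant_hypothesis: str
    top_evidence: list = field(default_factory=list)
    interpretation_boundary: list = field(default_factory=list)

    def risk_delta(self):
        """Change in risk score, rounded to one decimal place."""
        return round(self.risk_after - self.risk_before, 1)


alert = AlertArtifact(
    protocol="Example Protocol",
    risk_before=18.4,
    risk_after=31.7,
    confidence=0.84,
    forecast_context="near-horizon TVL deterioration risk elevated",
    dominant_hypothesis="Liquidity Drain",
    top_evidence=["alert pressure spike across recent monitoring window"],
    interpretation_boundary=["not a formal exploit confirmation"],
)

# Serialize for routing or storage; asdict() converts the dataclass to a plain dict.
payload = json.dumps(asdict(alert), indent=2)
```

Keeping the interpretation boundary inside the record, rather than in surrounding UI text, means the caveat travels with the alert wherever it is routed.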

11. Evaluation Methodology

Evaluation methodology underpins the credibility of every score and alert Quantir produces. Even before full quantitative validation is complete, the document can define how Quantir evaluates detection quality, false positives, calibration, lead-time, and strategy- or hypothesis-level interpretation quality.

The evaluation framework centers on:

- benchmark protocol set

- event taxonomy

- train / validation split logic

- precision / recall / false-positive framing

- calibration of confidence outputs

- lead-time definitions where applicable

Quantir's evaluation frame is defined at three levels: protocol-level risk scoring, event-level detection, and hypothesis-level interpretation. Protocol-level evaluation should test whether score movement aligns with materially adverse protocol trajectories and whether confidence outputs are reasonably calibrated. Event-level evaluation should measure the precision of flagged transfers, privileged-call detections, hidden-flow indicators, and news-conditioned risk events against reviewed benchmarks. Hypothesis-level evaluation should assess whether the agent judge ranks the dominant hypothesis coherently relative to known incident narratives while still preserving Liquidity Migration or mixed-state outcomes when evidence is weak or conflicting. Historical train and validation design should remain time-aware and resistant to outcome leakage, especially when failed or degraded projects are part of the reference corpus. Where lead-time is discussed, it should be defined against the earliest materially verifiable adverse protocol signal rather than against retrospective narrative summaries of the incident.
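Two of these ideas, a time-aware split and a calibration check on confidence outputs, can be sketched briefly. The Brier score and binned reliability table are standard techniques chosen here for illustration; the whitepaper does not specify which calibration metric Quantir uses, and the function names and the `"t"` record key are assumptions.

```python
def time_aware_split(events, cutoff):
    """Split records by time so validation data is never earlier than training data.

    events: list of dicts with a comparable "t" key (hypothetical field name).
    """
    train = [e for e in events if e["t"] < cutoff]
    valid = [e for e in events if e["t"] >= cutoff]
    return train, valid


def brier_score(confidences, outcomes):
    """Mean squared gap between predicted confidence and the 0/1 outcome (lower is better)."""
    pairs = list(zip(confidences, outcomes))
    return sum((c - o) ** 2 for c, o in pairs) / len(pairs)


def reliability_table(confidences, outcomes, n_bins=5):
    """Group predictions into confidence bins and compare mean confidence with hit rate."""
    bins = [[] for _ in range(n_bins)]
    for c, o in zip(confidences, outcomes):
        idx = min(int(c * n_bins), n_bins - 1)  # confidence 1.0 falls in the last bin
        bins[idx].append((c, o))
    table = []
    for idx, items in enumerate(bins):
        if not items:
            continue
        mean_conf = sum(c for c, _ in items) / len(items)
        hit_rate = sum(o for _, o in items) / len(items)
        table.append((idx, len(items), mean_conf, hit_rate))
    return table
```

A well-calibrated confidence output would show mean confidence close to hit rate in each populated bin. Running the check only on the validation side of the time split keeps the assessment free of outcome leakage.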

At the product level, lead-time should be framed as an economic metric, not only a research metric. For LPs, hedged participants, and reaction-sensitive operators, an alert is valuable only if it arrives while the user can still change something at materially lower cost than a post-facto price alert would allow. That may mean withdrawing earlier, resizing a hedge before funding drag compounds, avoiding a deteriorating carry trade, or deciding not to remain exposed while liquidity conditions worsen. In this sense, useful lead-time is a measure of preserved action quality.

The evaluation protocol can therefore already define three practical boundaries. A good alert is one that identifies a materially risk-relevant state change with enough protocol specificity and supporting evidence to justify investigation or action review. A false positive is an alert that escalates scrutiny without sufficient materially adverse follow-through or reviewable evidence support. Lead-time is the interval between the system crossing an actionable alert threshold and the earliest materially verifiable adverse protocol signal, measured in a way that preserves time ordering and avoids hindsight leakage.
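The three boundaries above can be sketched as a small labeling routine. The threshold value, the follow-through `horizon`, the label strings, and the function names are assumptions introduced for illustration; the whitepaper deliberately leaves exact thresholds out of scope.

```python
from datetime import datetime, timedelta


def first_threshold_crossing(scores, threshold):
    """Return the first timestamp at which the score reaches the threshold.

    scores: list of (timestamp, score) tuples sorted by time.
    """
    for ts, score in scores:
        if score >= threshold:
            return ts
    return None


def evaluate_alert(scores, threshold, adverse_at, horizon):
    """Label one protocol episode and compute lead-time where applicable.

    adverse_at: time of the earliest materially verifiable adverse signal, or None.
    horizon: hypothetical maximum follow-through window for a valid alert.
    """
    alert_at = first_threshold_crossing(scores, threshold)
    if alert_at is None:
        label = "missed" if adverse_at is not None else "no_alert"
        return {"label": label, "lead_time": None}
    if adverse_at is None or adverse_at - alert_at > horizon:
        # Scrutiny escalated without adverse follow-through inside the window.
        return {"label": "false_positive", "lead_time": None}
    lead_time = adverse_at - alert_at
    label = "good_alert" if lead_time > timedelta(0) else "late"
    return {"label": label, "lead_time": lead_time}


t0 = datetime(2024, 1, 1, 0, 0)
scores = [(t0, 18.4), (t0 + timedelta(hours=1), 24.0), (t0 + timedelta(hours=2), 31.7)]
outcome = evaluate_alert(
    scores,
    threshold=30.0,
    adverse_at=t0 + timedelta(hours=8),
    horizon=timedelta(hours=24),
)
```

Because the alert time is taken from the first threshold crossing in time order and the adverse signal is fixed independently, the computed lead-time cannot be inflated by hindsight about the incident.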

One published use case is especially useful here. The companion case Multi-Stage Risk Accumulation and Delayed Market Reaction shows a pattern in which modeled protocol risk rises in distinct impulsive phases while price remains locally stable, followed by a later market breakdown after the majority of risk signals have already accumulated. This demonstrates exactly the kind of hidden divergence and delayed market reaction that the whitepaper means by lead-time. The main document therefore references Use Cases rather than reproducing every case image inline.

12. Competitive Positioning and Practical Differentiation

What makes Quantir different in practice is not only that it combines more signals, but that it changes what a user can do with those signals. Simpler systems often stop at charts, flat alerts, or static audit notes. Quantir is designed to connect protocol-level risk with transaction evidence, contract-aware runtime signals, hidden-flow blind-spot mitigation, forecast-aware context, and ranked interpretation inside one triage-to-investigation workflow.

| Capability | Generic dashboards | Alert tools | Audit surfaces | Quantir |
|---|---|---|---|---|
| Protocol-level risk score | Partial or indirect | No | No | Yes |
| Hidden-flow blind-spot coverage | No | Limited | No | Yes |
| Contract-aware runtime evidence | No | Limited | Static only | Yes |
| Forecast-aware context | No | Rare | No | Yes |
| Triage-to-dashboard workflow | Limited | Limited | No | Yes |
| Ranked hypothesis interpretation | No | Limited | No | Yes |

The practical differentiation is therefore workflow-level, not cosmetic. Quantir is intended to help a user move from triage, to investigation, to decision with fewer blind spots and less manual stitching of evidence than simpler monitoring categories usually provide.

13. Business Model and MVP-to-Scale Roadmap

Quantir's commercial logic starts from one concrete buyer pain: the cost of staying positioned against a deteriorating protocol is often measurable before the deterioration is obvious in price alone. This is especially true for LPs, hedged participants, and desks or operators exposed to funding drag, carry compression, withdrawal timing, execution cost, or delayed hedge adjustment. For these users, Quantir is not only a dashboard subscription. It is a decision-support layer intended to preserve reaction time.

A supporting hedging analysis for Ethereum staking and futures is useful grounding for this framing. It shows, at the working-example level, that funding fees, execution costs, and break-even thresholds materially compress net yield even in a structured hedged carry setup. In other words, delayed reaction has observable economics before any catastrophic protocol failure is required to make the monitoring problem expensive.
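The compression described here reduces to simple arithmetic, sketched below under strong simplifying assumptions: all quantities are annualized fractions of notional, funding is treated as a net cost paid by the hedge, and the function names and input values are hypothetical rather than taken from the supporting analysis.

```python
def net_hedged_yield(staking_yield, funding_cost, execution_cost):
    """Net annualized yield of a staked position hedged with futures.

    funding_cost: net funding paid by the hedge (negative if funding is received).
    execution_cost: annualized trading and rebalancing costs.
    """
    return staking_yield - funding_cost - execution_cost


def break_even_funding(staking_yield, execution_cost):
    """Funding cost at which the hedged position earns exactly zero."""
    return staking_yield - execution_cost


# Hypothetical inputs for illustration only.
net = net_hedged_yield(staking_yield=0.04, funding_cost=0.025, execution_cost=0.005)
threshold = break_even_funding(staking_yield=0.04, execution_cost=0.005)
```

With these illustrative inputs, most of the gross staking yield is consumed by funding and execution, which is the sense in which a delayed hedge adjustment carries a cost well before any protocol failure.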

From that starting point, the tier model follows buyer maturity. Free supports direct-exposure users who need to monitor one protocol closely. Base supports users comparing several live exposures and needing broader triage. Pro is intended for users who need continuous multi-protocol monitoring, deeper alert review, and a richer investigation surface across many monitored systems. Beyond this, the expansion path includes modular analytical tools, broader automation, API-connected workers or agents, custom alert routing, and enterprise packaging for larger operational teams or protocol-side deployments.

This means the monetization path is not only more protocols per tier. It is also a progression from basic monitoring access to deeper alerts, richer evidence, more automation, and eventually workflow integration for professional users and teams.

Future product scaling can expand this model through a dedicated ingestion engine, a distributed node layer, and broader automated protocol onboarding, including the planned deployment of three core nodes.

Commercially, Quantir can address three user groups: reaction-sensitive individual users with live protocol exposure, professional monitoring users who need broader multi-protocol coverage and faster triage, and larger operational teams that may later consume Quantir through automation, API-connected workers or agents, and expanded protocol-ingestion infrastructure. Exact pricing and enterprise packaging can remain a later commercial refinement.

A dedicated stage-by-stage delivery view is maintained separately in Quantir Roadmap.

14. Risks, Limitations, and Responsible Use

Quantir is a risk-intelligence and analytical support system, not a guarantee of exploit prevention or complete visibility. This is especially important in areas such as hidden private-flow interpretation, smart-contract monitoring, and AI-driven hypothesis discussion.

Core limitations include:

- no guarantee of complete hidden-flow visibility

- no guarantee of exploit prevention

- model outputs remain probabilistic

- some architecture layers are still evolving at MVP stage

- protocol cooperation can improve contextual accuracy but does not eliminate risk

Quantir does not provide investment advice, legal advice, or a formal security certification of any monitored protocol. Its outputs are analytical signals intended to support monitoring and decision-making, not a guarantee of safety, solvency, exploit prevention, or market outcome. Users remain responsible for their own operational, financial, and compliance judgments.

15. Appendix

The appendix is organized as a practical reference structure for the public whitepaper:

- Appendix A. Glossary

- Appendix B. Signal Dictionary

- Appendix C. Hypotheses, Strategies, and Event Taxonomy

- Appendix D. Formula References and Scoring Notes

- Appendix E. Figure List

- Appendix F. External Academic References

- Appendix G. Companion Resources

Within that structure, the appendix includes:

- glossary

- signal dictionary

- hypothesis list

- strategy list

- event list

- formula references

- figure list

- external academic references

External academic references:

- Leveraging AI-powered data streams for predictive risk assessment in cross-protocol DeFi lending platforms

- Machine learning algorithms for DeFi risk assessment

Companion resources: